BitFlow, Inc., a division of Advantech, today announced that its Axion-CL Camera Link frame grabber was selected by engineers at NASA’s Goddard Space Flight Center as the ground-based interface device in a system-level Single Event Effects (SEE) test campaign. The work, documented in a NASA Technical Memorandum and sponsored by the NASA Electronic Parts and Packaging (NEPP) Program, subjected a Princeton Infrared Technologies (PIRT) 1280MVCam InGaAs shortwave infrared (SWIR) camera to one of the most demanding radiation environments achievable on the ground.

Testing was conducted at the NASA Space Radiation Laboratory (NSRL) at Brookhaven National Laboratory. The device under test was the PIRT 1280MVCam, a camera built on a backside-illuminated, substrate-removed InGaAs focal plane array delivering 1280×1024 resolution with 12 μm pixel pitch and 14-bit analog-to-digital conversion. It was irradiated with high-energy heavy-ion beams including iron (Fe), silver (Ag), and terbium (Tb) species at energies reaching 575 MeV/nucleon. The objective was to qualify a commercial off-the-shelf (COTS) camera system as a candidate for space-based instrumentation in the Aerosol Radiometer for Global Observation of the Stratosphere (ARGOS) program. ARGOS is a compact NASA-supported instrument designed to measure stratospheric aerosols using limb scattering.

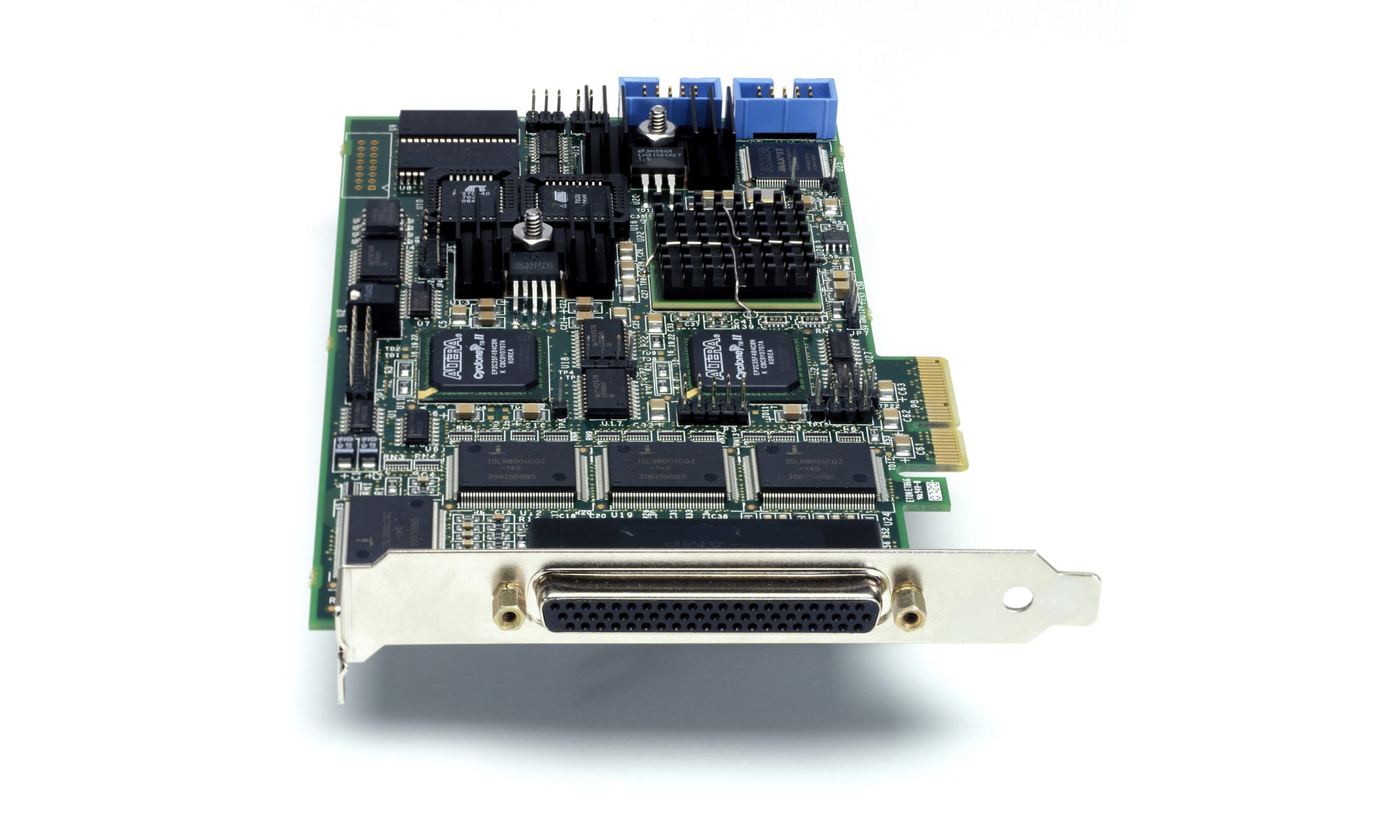

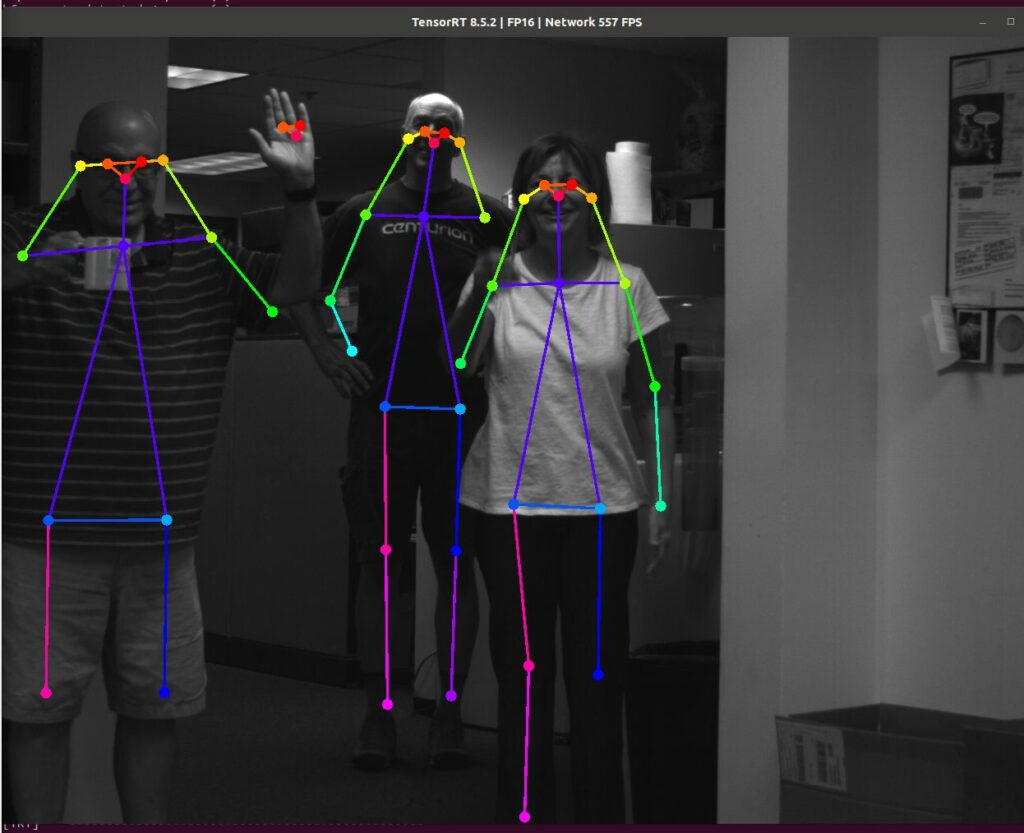

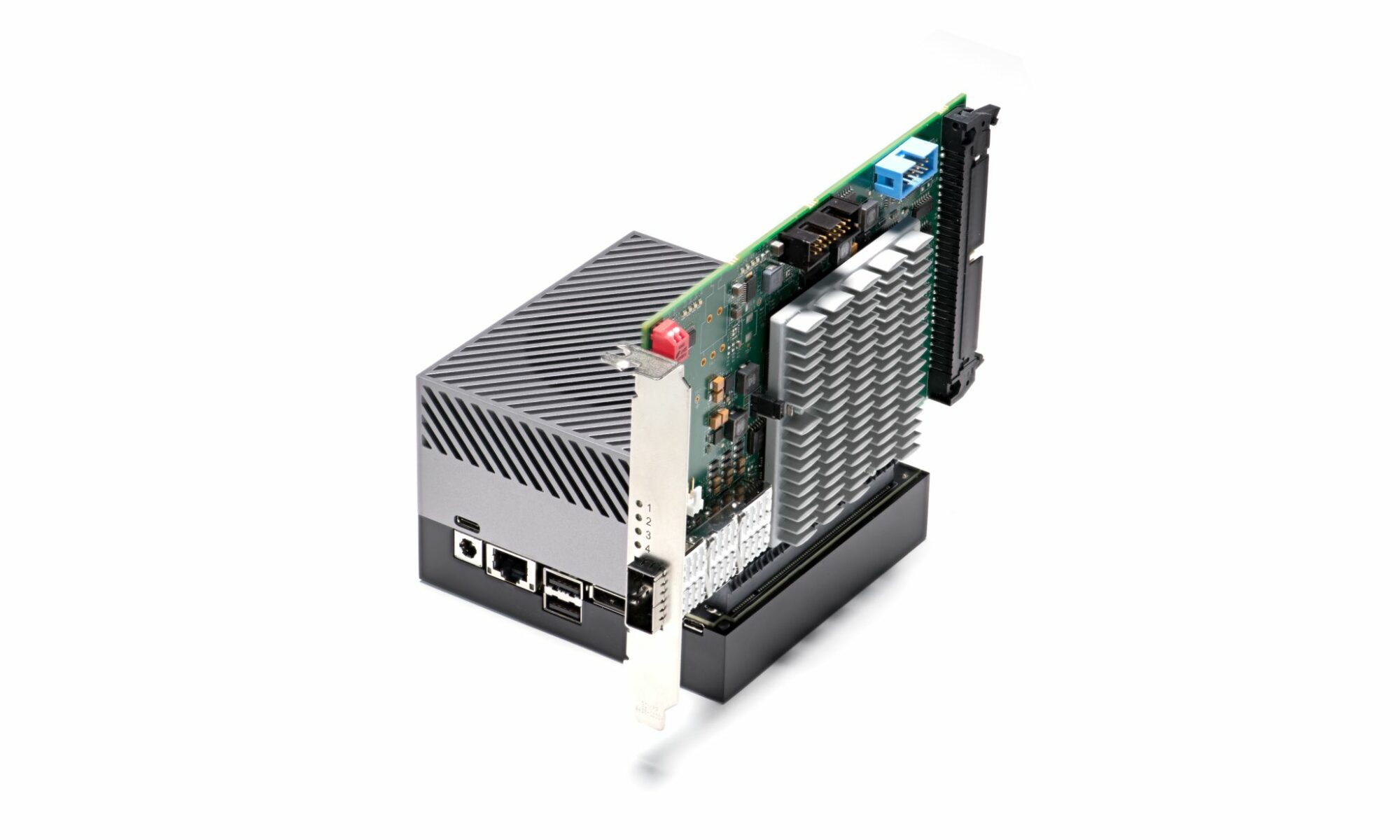

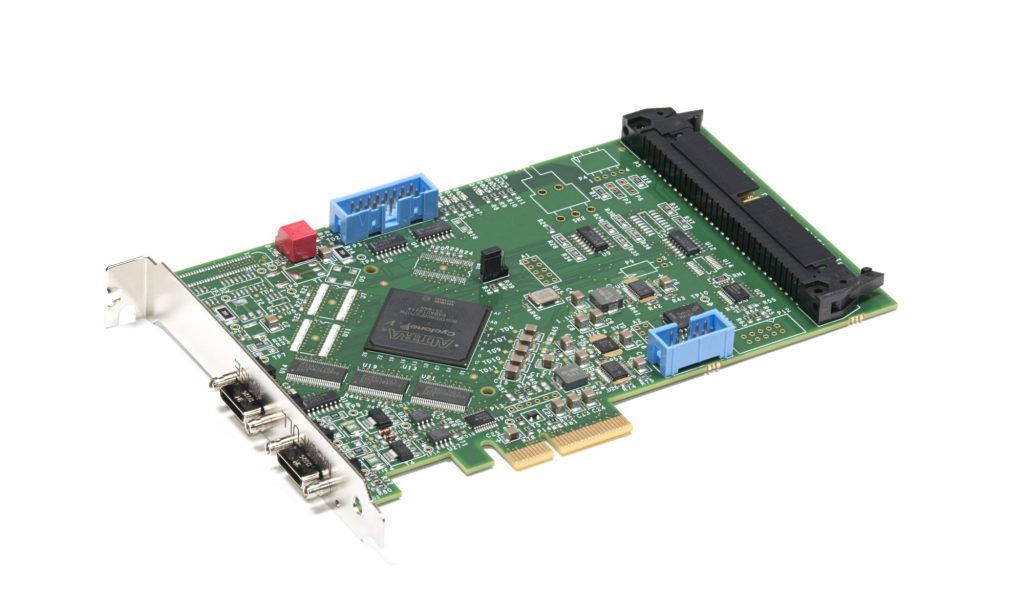

At the center of the test architecture, the BitFlow Axion-CL frame grabber served as the sole communication and data acquisition link between the irradiated camera system and the control computer. Installed in a host computer positioned adjacent to the beam port, the frame grabber interfaced with the PIRT 1280MVCam via the Camera Link standard, enabling continuous real-time image capture and command transmission throughout each irradiation run. The Camera Link connection carried both image frame data and serial command traffic, allowing NASA engineers to monitor Single Event Functional Interrupt (SEFI) signatures in captured pixel data and to query system configuration registers before and after irradiation through a single interface.

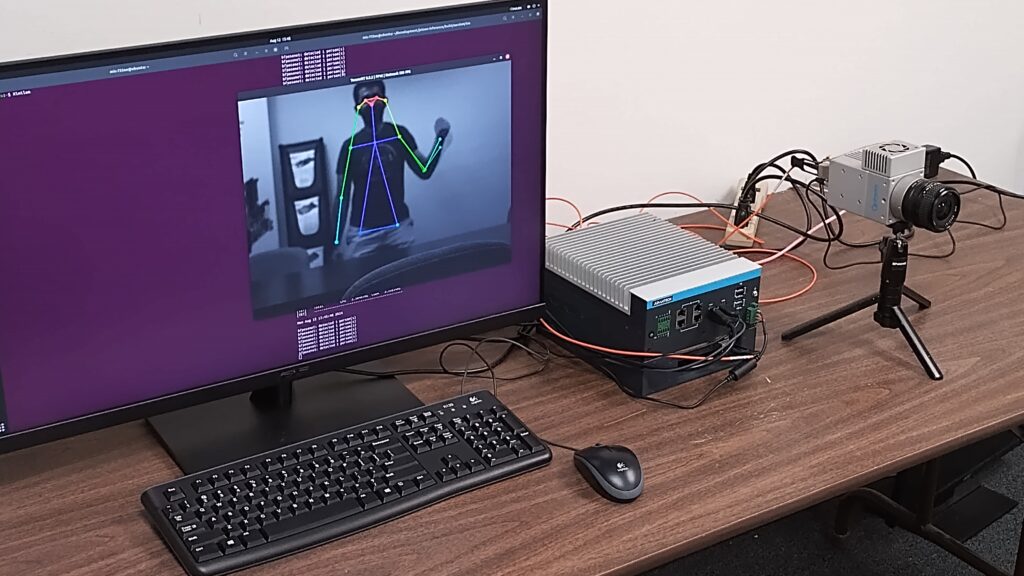

The technical demands of the test environment were exceptional. The camera system was positioned directly in the ion beam, with the BitFlow frame grabber and host computer located in an adjacent shielded cave and controlled remotely via 100-foot Ethernet cable runs and signal extenders. The Camera Link interface had to maintain data integrity across this extended topology while the device under test, built from three stacked printed circuit boards, was continuously bombarded by ions with high linear energy transfer (LET) at the device surfaces.

The test campaign produced results that engineers can act on: persistent SEFIs were detected at the lowest LET tested, and system communication failures occurred after only small fluences, informing NASA’s component screening strategy for future ARGOS mission hardware. Throughout all seven irradiation runs, from the first iron-beam exposures through the final unrecoverable system failure event, the BitFlow frame grabber and Camera Link data path continued to acquire data without interruption, enabling the full data set that underpins the published NASA Technical Memorandum.

ARGOS launched on March 15, 2025, aboard a SpaceX Transporter-13 rideshare mission from Vandenberg Space Force Base.

“When NASA engineers design a radiation test environment where every data packet counts and no failure of the acquisition chain is permissible, they turn to BitFlow. Being specified into NASA’s NEPP program test infrastructure is a powerful validation of the reliability and technical depth that BitFlow delivers at the system level.” – Donal Waide, Director of Business Development, iSystems, Advantech.